I agreed to do a series of video interviews with notable scholars at the Annual EDEN Conference in Oslo earlier this month, and I was really spoilt for choice. There were so many prominent distance education and e-learning practitioners and theorists in attendance that it was a little difficult to know where to start. I managed to get Professor Michael G Moore (formerly of Wisconsin-Madison and Penn State Universities) to sit down and have a chat with me about his life in distance education, the history of the subject and his own experiences as a learner. I first met Michael at a conference in Ankara, Turkey in 1998, and our paths have crossed many times since. He is well known as one of the pioneers of distance education, one of the original team of academic consultants working with the British government to establish the Open University in the 1960s, and latterly, as the long-serving founding editor of the American Journal of Distance Education. He is also credited with the theory of transactional distance, which has influenced many studies and publications on the topic over the last 30 years. Michael is quite simply an icon of distance education, and it is worth sitting for a few minutes and hearing what he has to say. Here's the video:

An interview with Michael G Moore by Steve Wheeler is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License.

Earlier today at the EDEN Annual Conference at the University of Oslo, I interviewed June Breivik. June, who is at the Norwegian Business School, will be presenting a keynote speech tomorrow at the event on 'Disruptive Education.' She has some strong views on how education needs to be changed, and believes that disruption is necessary to challenge the current paradigms of teaching in higher education. She sees the teacher role changing, and argues that technology is a driving force in that change. She is not technologically deterministic, but sees specific technologies - social media tools such as blogging and Twitter - as a means of liberating learners (and their teachers) into a new way of creative communication, and a new means of representing knowledge. June feels that students should take centre stage in the learning process and that teachers should cede the 'power over the learner' they have held for so long. She takes a positive view of the idea of the Flipped Classroom, and she also practises it in her own professional context. It is refreshing to know that June believes in walking the talk. The brief video interview below provides a fascinating primer for what she will present to the EDEN delegates tomorrow morning here in Oslo.

This post is mirrored on the official EDEN Conference blog

Disruptive education by Steve Wheeler is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License.

If we scratch just below the surface of education, and we examine the nature of knowledge, we see an interesting challenge. It is increasingly apparent that knowledge as we 'know it' is inextricably linked to those who are in control of it. The knowledge gate-keepers have been in charge for some time, and knowledge is power. But all of this is already changing, as those beyond the inner circle begin to understand that through technology, they can create knowledge too. Our conceptions of knowledge could be said to be in a state of flux and uncertainty. If we accept that there is no monopoly anymore, we need to ask some questions. In an age where anyone with an internet connection can create content, who now decides what we accept as a 'fact', and who is in control of our representations of reality?

Evidence from a number of sources has indicated that our conceptions of knowledge are indeed changing and that new and emerging technologies have a key role in the process (Guy, 2004; Lankshear and Knobel, 2006; Kop, 2007; Kress, 2009). Personalised tools lead to personalised learning, and the impact of this should not be underestimated. It is clear that tacit, informal knowledge resists explicit, formal knowledge. This is largely because tacit knowledge includes the concepts, ideas and experiences that we have internalised personally, as opposed to the formalised knowledge we have learnt, which is often decontextualised (Wheelahan, 2007). For many in today's technology-rich, rapidly changing, networked society, personalised learning has acquired more value than anything that can be offered by organisations. Person-specific, individualised knowledge trumps generic knowledge that was suited to the needs of the industrial era.

Bates (2009) reinforced the view that generic, academic knowledge is no longer enough to meet the needs of the networked society:

"...it is not sufficient just to teach academic content (applied or not). It is equally important also to enable students to develop the ability to know how to find, analyse, organise and apply information/content within their professional and personal activities, to take responsibility for their own learning, and to be flexible and adaptable in developing new knowledge and skills. All this is needed because of the explosion in the quantity of knowledge in any professional field that makes it impossible to memorise or even be aware of all the developments that are happening in the field, and the need to keep up-to-date within the field after graduating."

Lyotard (1984) went further, suggesting that the boundaries between disciplines are eroding (many consider that they were always a false distinction anyway), and that traditional forms of knowledge transmission would be supplemented (and in some cases supplanted) by new methods of knowledge acquisition through technology. 30 years on, Lyotard's predictions are uncannily accurate. Citizen journalism, for example, is rapidly becoming a key component of contemporary news reporting, appearing in many major TV news channel broadcasts. Everyone who has a smartphone, it seems, is a potential photojournalist. Wikipedia has for many replaced Encyclopaedia Britannica as the first port of call for knowledge acquisition. The fact that anyone with an internet connection can now contribute to knowledge is anathema to those who believe that knowledge generation should be the sole preserve of experts (Keen, 2007). Regardless of any such objections, user generated content is the dominant form of knowledge available on the web, and continues to grow. The checks and balances being implemented by the likes of Wikipedia are attempts to ensure that such knowledge is accurate and relevant. The users themselves will ensure that it is kept up to date. As Kop (2007) points out, 'knowledge is no longer transferred, but created and constructed', and 'the validity of knowledge has become judged by the way it relates to the performance of society' (p. 193).

Are we witnessing the demise of the knowledge gate-keepers? Will we now see a decline in the Ivory Tower mentality that for centuries has held sway on learning for higher education? And how responsible is technology as a disruptor of this old paradigm of knowledge representation? Who is now in control of knowledge? We all are. What we do with that knowledge will determine the future of education.

References

Bates, T. (2009) Does technology change the nature of knowledge? Online Learning and Distance Learning Resources (Online publication)

Guy, T. (2004) Guess who's coming to dinner? cited in Kop, R. (2007) Blogs and wikis as disruptive technologies: Is it time for a new pedagogy? in M. Osborne, M. Houston and N. Toman (Eds.) The Pedagogy of Lifelong Learning. London: Routledge.

Keen, A. (2007) The cult of the amateur: How today's Internet is killing our culture and assaulting our economy. London: Nicholas Brealey Publishing.

Kop, R. (2007) Blogs and wikis as disruptive technologies: Is it time for a new pedagogy? in M. Osborne, M. Houston and N. Toman (Eds.) The Pedagogy of Lifelong Learning. London: Routledge.

Kress, G. (2009) Literacy in the New Media Age. London: Routledge.

Lankshear, C. and Knobel, M. (2006) New Literacies: Everyday practices and classroom learning. Maidenhead: Open University Press.

Lyotard, J. F. (1984) The post-modern condition: A report on knowledge. Manchester: Manchester University Press.

Wheelahan, L. (2007) What are the implications of an uncertain future for pedagogy, curriculum and qualifications? in M. Osborne, M. Houston and N. Toman (Eds.) The Pedagogy of Lifelong Learning. London: Routledge.

Photo by Steve Wheeler

Beneath the facade... by Steve Wheeler is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License.

I pointed out recently that many of the older theories of pedagogy were formulated in a pre-digital age. I blogged about some of the new theories that seem appropriate as explanatory frameworks for learning in a digital age. These included heutagogy, which describes a self-determined approach to learning, a new model of peer-peer learning known as paragogy, a post modernist 'rhizomatic' learning explanation, distributed learning and connectivist theory, and also a short essay on the digital natives/immigrants discourse. I questioned whether the old models are anachronistic.

Is it now time for these new theories to replace the old ones? Do we need them to describe and frame what is currently happening in an age where everyone is as connected as they wish to be, where social media are the new meeting places, and where mobile telephones are pervading every aspect of our lives? Are the old theories still adequate to describe the kinds of learning that we witness today in our hyper-connected world? Do Vygotsky's ZPD theory or Bruner's Scaffolding model still cut the mustard? Or can they work together with the new theories to provide us with a basis to understand what is happening? How can we, for example, describe learning activities such as blogging, social networking, crowd sourced learning, or user generated content such as Wikipedia and YouTube using older theories? How might we begin to understand the issues surrounding folksonomies, peer learning, or collaborative informal learning that seem to occur spontaneously, outside the classroom, spanning the entire globe - using old theories that were written to describe what happens in a classroom? Sure, I'm being deliberately provocative here, but it's a needed discussion: Are the old models adequate, or are any of the new theories that are emerging more apposite, or more fit for purpose?

I finally got around to creating a slideshow that highlights some of the above issues, and features many of the theories I have previously written about. I stated in the presentation that theories are important for at least two reasons: Firstly, they enable us to explain what we are seeing from a particular perspective. Secondly, they can inform and justify our professional practice as teachers. I suggested that although the new theories are useful, we still need to take transformational learning theories into account, and we need to reconsider some of the social learning theories that we are already familiar with. I created the slideshow below as an accompaniment to an invited webinar I presented for ELESIG - hosted by the University of Nottingham. I will be interested in your views.


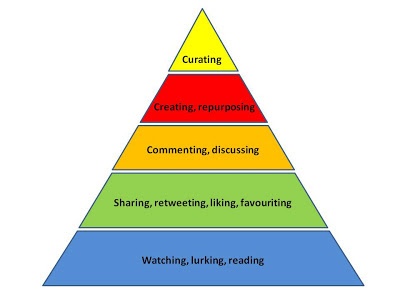

The Engagement Pyramid

(Adapted from Altimeter Group)
