Reading Boellstorff (2008) and his stories of virtual world encounters, I have some related observations. This may get a bit deep and multi-layered... I like layers of storytelling and meaning :-)

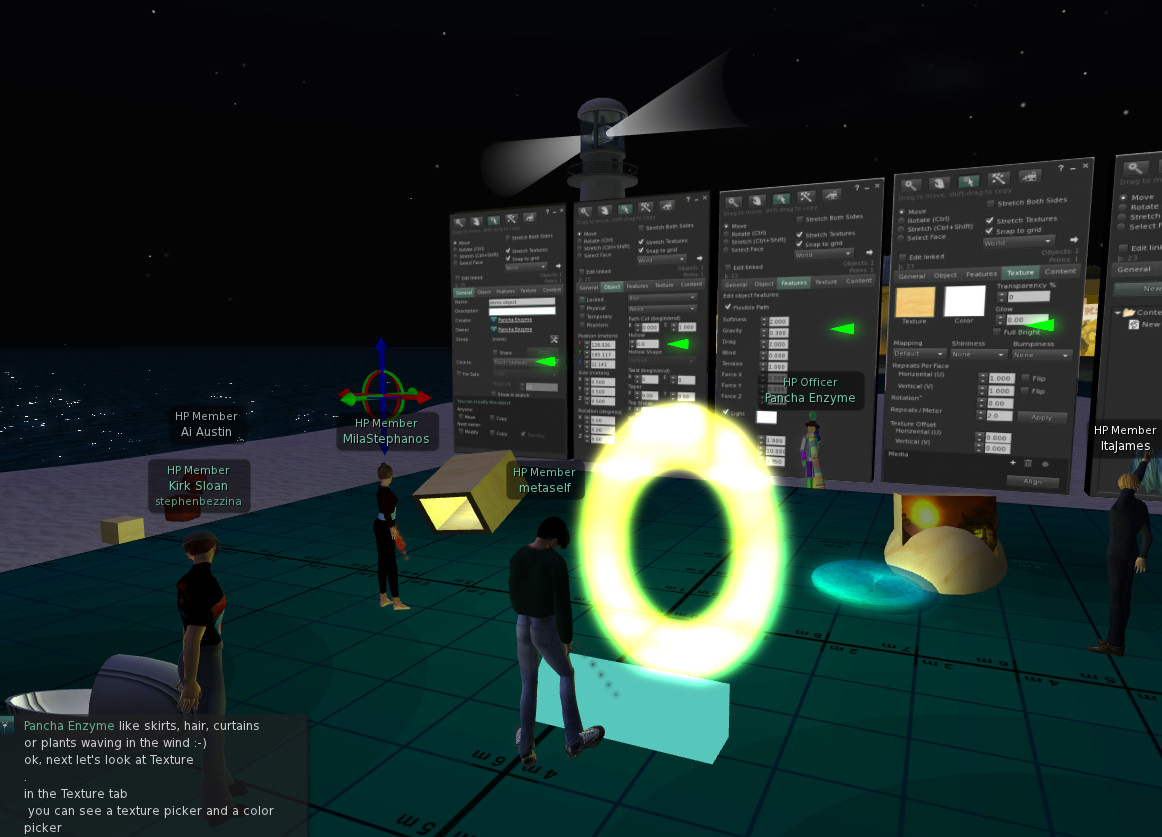

Some of you may (or may not) have noticed that my avatar changed appearance during the Second Life building tutorial this week. My normal bearded avatar in his flight suit outfit (there is a whole history behind that too) changed into a little round red ball. Why?

When "I" (Austin) am "he" (Ai), he normally pays attention and is responsive to what is happening around him. I do not like "busy" and "afk" indicators and prefer to log out, or go elsewhere in world. I am not happy to leave my avatar unattended and feel it would be rude to do so... though I have no problem with others adopting that style of use of virtual worlds.

For a few years I used some text-only, mobile or low-bandwidth non-graphical clients such as Radegast and Pocket Metaverse on the iPad. I was always unhappy that I had no idea what my avatar would look like or how it would be positioned, and that it might be facing the wrong way for those I interacted with. It was also difficult to make the avatar's appearance show clearly that I was on a text chat/IM-only client.

So I put some effort into designing an avatar that reflected this state of affairs. This was a Personal Satellite Assistant (PSA)... a real device NASA is working on for the Space Station that uses AI technology. It acts as an assistant to relay messages, give instructions and help, and record via camera what is going on in experiments on the Space Station. It hovers near astronauts to help them, or can be sent to perform tasks. It has a screen on its front to show astronauts images, video, messages, etc. I have explicit permission from NASA Ames Research Lab to use the image of the skin of this device in my work and in virtual worlds.

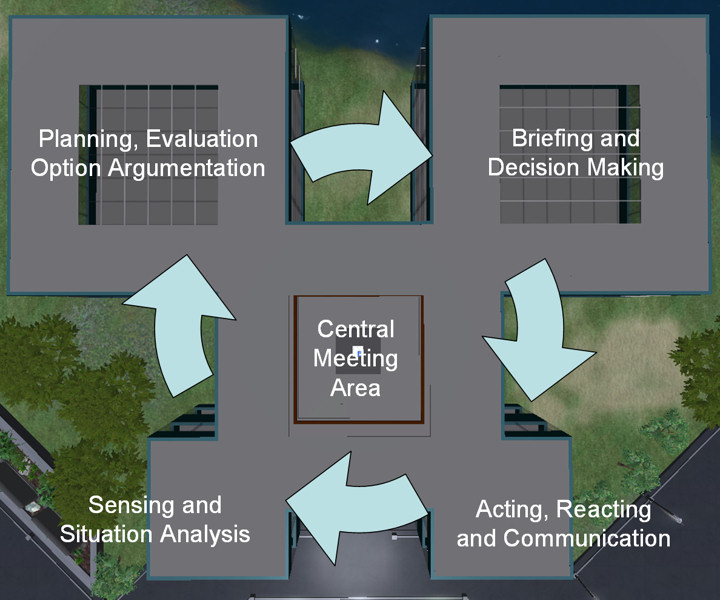

I have used a sphere with this PSA skin for a number of AI-driven and autonomous devices in Second Life for several years. Enter any I-Room (http://openvce.net/iroom) and there will usually be one at the entrance, acting as a greeter or as a sensor that sends visitor and status information back to our intelligent system over the web.

So I created the Ai PSA Avatar with the PSA shape, size and skin, and put a portrait image of "Ai" on the screen to show that it is him watching, as if over a video teleconference link - i.e. not fully immersed and "in world".

Even though I was not on a low-bandwidth or text client at the SL building tutorial, my attention was elsewhere. In fact my camera was not even in the same region as the tutorial space. I was looking at an object in a distant region that had the properties I wanted to copy in order to replicate a complex object I did not know how to build. But I did not feel comfortable just leaving "Ai" unattended... and did not want to fly away to get the information. I have the same issue when I am looking at web pages, or using other applications alongside the Second Life viewer. This was a case where it felt exactly right to use the Ai PSA avatar.

I see this as "Ai" looking through the "PSA" robot floating in the meeting space... "I" am behind "Ai", but it's "Ai" that is disconnected from the meeting space.

Boellstorff, T. (2008). Personhood. In Coming of Age in Second Life (pp. 118-150). Oxford: Princeton University Press.

[First posted on IDEL11 Discussion Forum, 19-Oct-2011]